Although sometimes we don’t realize it, we use algorithms in our daily lives. From computers, smartphones to space science, algorithm is an essential tool or combine of tools nowadays.

Table of Contents

What is an algorithm?

The algorithm is simply a “ recipe ” for performing a task or solving a problem. Computer science uses this tool a lot, in particular in the field of programming.

A very important aspect of algorithms is efficiency. For many problems, it is quite easy to find a procedure that ends up giving the correct answer, but the real difficulty lies in finding an algorithm that finds the answer efficiently.

If the operations of an algorithm run one after the other, it is a sequential algorithm, if they run in parallel, it is a parallel algorithm. If the algorithm exploits tasks running on a network of processors, we speak of a distributed algorithm.

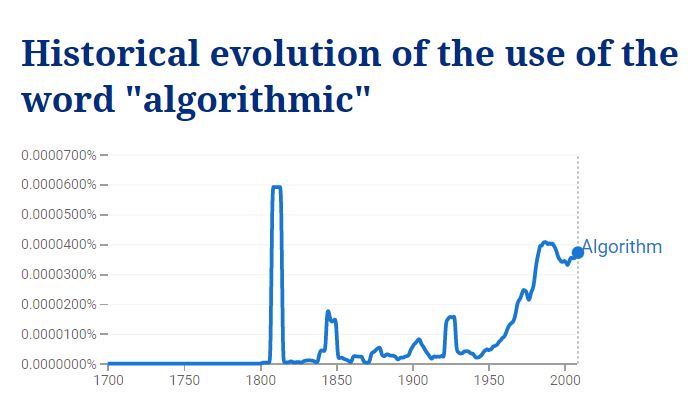

The word “algorithm” comes from the name of the mathematician Al Khuwarizmi (Latinized in the Middle Ages as Algoritmi), who in the 9th century wrote the first systematic work on the solution of linear and quadratic equations.

Example Algorithm

Alice and Charles play a game: Alice thinks of a number between 1 and 100 and Charles tries to guess it. Charles can ask for a particular number, and Alice responds if that number is the one she’s thinking or if the number is larger or smaller than she thinks.

Charles can simply ask 1, then 2, then 3, and so on until Alice answers that he is right. This algorithm is very simple and always works. But let’s compare it to this other algorithm: Charles starts by proposing the number 50. If he succeeds, the game is over.

On the other hand, if Alice answers that the number is higher, Charles will continue and propose 75. Or, if Alice answers that the number is lower, the next number that Charles suggests is 25. Charles repeats this strategy several times, in each time halving the range of possible numbers, until he unequivocally reaches the number Alice chose.

This second algorithm also finds the answer each time, but is much more efficient. Thus, with the first algorithm, Charles may have to ask up to 100 questions whereas with the second algorithm, whatever number Alice chooses, Charles will ask a maximum of 6 questions. This idea of “halving” is very common in the field of algorithms.

To sum up, algorithmics includes the study of problem solving by implementing sequences of elementary operations according to a defined process leading to a solution.

Algorithm structures

The concepts implemented in algorithms, for example according to N. Wirth’s approach for the most widespread languages (Pascal, C, etc.), are few in number, they belong to two classes:

- control structures

- sequences

- conditional

- loops

- data structures

- constants

- variables

- tables

- recursive structures (lists, trees, graphs)

This division is sometimes difficult to perceive for certain languages (Lisp, Prolog, etc.) more based on the notion of recursion where certain control structures are implicit (and therefore seem to disappear).

Some indications on the efficiency of the algorithms

Often, the efficiency of an algorithm is only known asymptotically, i.e. for large values of the parameter n. When this parameter is small enough, a higher complexity algorithm may in practice be more efficient.

Thus, to sort an array of 30 rows (this is a small parameter), it is useless to use an advanced algorithm such as Quick Sort (one of the most efficient sorting algorithms on average): most trivial sorting algorithm will be efficient enough.

Between two algorithms whose complexity is identical, we will seek to use the one whose memory occupation is the lowest.

Algorithmic complexity analysis can also be used to assess the memory usage of an algorithm. Finally, the choice of one algorithm over another must be made based on the data that is expected to be provided as input.

Thus, the Quicksort (or rapid sort), when we choose the first element as pivot, behaves in a disastrous way if we apply it to an already sorted list of values. It is therefore not wise to use it if it is expected that the program will receive lists that are already almost sorted as input.

Another parameter to consider is the locality of the algorithm. For example, for a virtual memory system which has little memory (compared to the number of data to be processed), Quick Sort will normally be more efficient than Heap Sort because the former only passes once on each element of memory while the second accesses the memory in a discontinuous way (which increases the risk of swapping).

Finally, there are some algorithms whose complexity is said to be damped.

This means that, for some executions of the algorithm (edge cases), the complexity of the algorithm will be much higher than the average case. Of course, the notion of damped complexity is only used in cases where this reaction is very marginal.