Since the rise of generative AI, many companies have rushed to launch products in a bid to capture market share. AI chip leader NVIDIA recently announced a new MLPerf benchmark record for its H100 GPU, underscoring its position at the front of the field.

In NVIDIA's latest MLPerf submission, the Eos supercomputer completed the GPT-3 training benchmark in just 3.9 minutes. This benchmark trains a 175-billion-parameter model on one billion tokens. NVIDIA's previous record on the same benchmark was 10.9 minutes, so the new result is nearly three times faster, a significant leap forward.

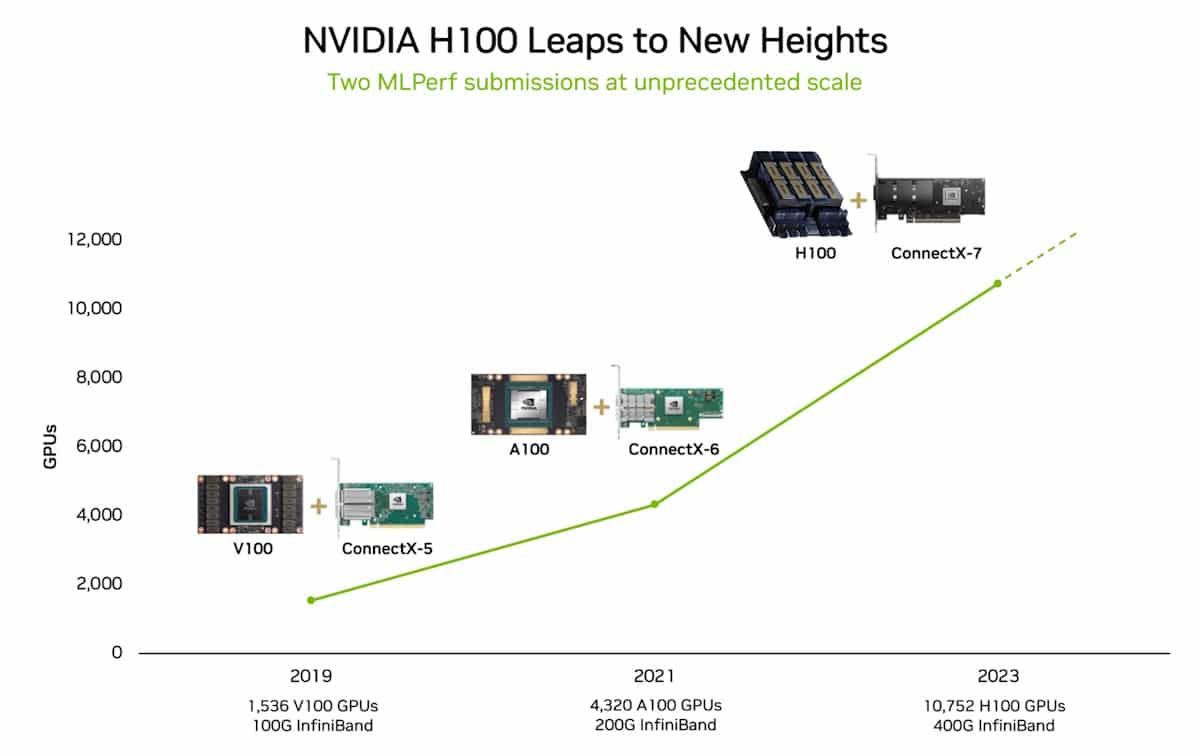

The H100's outstanding performance stems first and foremost from the combination of NVIDIA's Hopper GPU architecture and its comprehensive software stack. Scale was another key factor: the benchmark run on Eos used 10,752 NVIDIA H100 Tensor Core GPUs.

The Hopper architecture and NVIDIA's mature software, including the NeMo framework for LLM training, work together to get the most out of the platform.

Another record came in system scalability. Thanks to a range of software optimizations, Eos reached 93% scaling efficiency, meaning the larger system delivered 93% of the ideal linear speedup from its added GPUs. Reaching this level with 10,752 H100 GPUs less than six months after the previous record highlights these efficiency gains.

NVIDIA set its previous record with 3,584 Hopper GPUs, so tripling the GPU count while cutting training time nearly threefold underscores the importance of efficient scaling. Adding hardware raises raw computing power, but without proper software support, much of that gain can be lost to poor system efficiency.
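To make the scaling figures concrete, here is a small Python sketch that recomputes them from the numbers quoted above. It assumes the 10.9-minute record was set with 3,584 GPUs and the 3.9-minute run used 10,752. It also assumes that scaling efficiency means the measured speedup divided by the ideal (linear) speedup from the larger GPU count. The function names are illustrative only.

```python
def speedup(old_minutes: float, new_minutes: float) -> float:
    """How many times faster the new run is than the old one."""
    return old_minutes / new_minutes


def scaling_efficiency(old_gpus: int, new_gpus: int,
                       old_minutes: float, new_minutes: float) -> float:
    """Measured speedup divided by the ideal (linear) speedup from adding GPUs.

    Assumption: "scaling efficiency" is defined this way; 1.0 means perfect
    linear scaling.
    """
    ideal = new_gpus / old_gpus
    return speedup(old_minutes, new_minutes) / ideal


# Figures quoted in the article (training times rounded to 0.1 minute).
OLD_GPUS, NEW_GPUS = 3_584, 10_752
OLD_MIN, NEW_MIN = 10.9, 3.9

if __name__ == "__main__":
    s = speedup(OLD_MIN, NEW_MIN)
    e = scaling_efficiency(OLD_GPUS, NEW_GPUS, OLD_MIN, NEW_MIN)
    print(f"GPU ratio: {NEW_GPUS / OLD_GPUS:.1f}x")  # GPU ratio: 3.0x
    print(f"speedup: {s:.2f}x")                      # speedup: 2.79x
    print(f"scaling efficiency: {e:.0%}")            # scaling efficiency: 93%
```

Because the GPU count exactly triples, a perfectly linear system would run three times faster. The roughly 2.8x speedup therefore corresponds to about 93% efficiency, matching the figure cited above.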