OpenAI, which has released several powerful artificial intelligence models, recently launched Point-E, which takes text-to-image generation a step further by producing 3D models from text.

OpenAI’s earlier AI image generator, DALL-E, was well received and turned the underlying technology into a popular topic.

Afterwards, similar tools such as Stable Diffusion and Midjourney broadened the discussion of text-to-image technology across many fields.

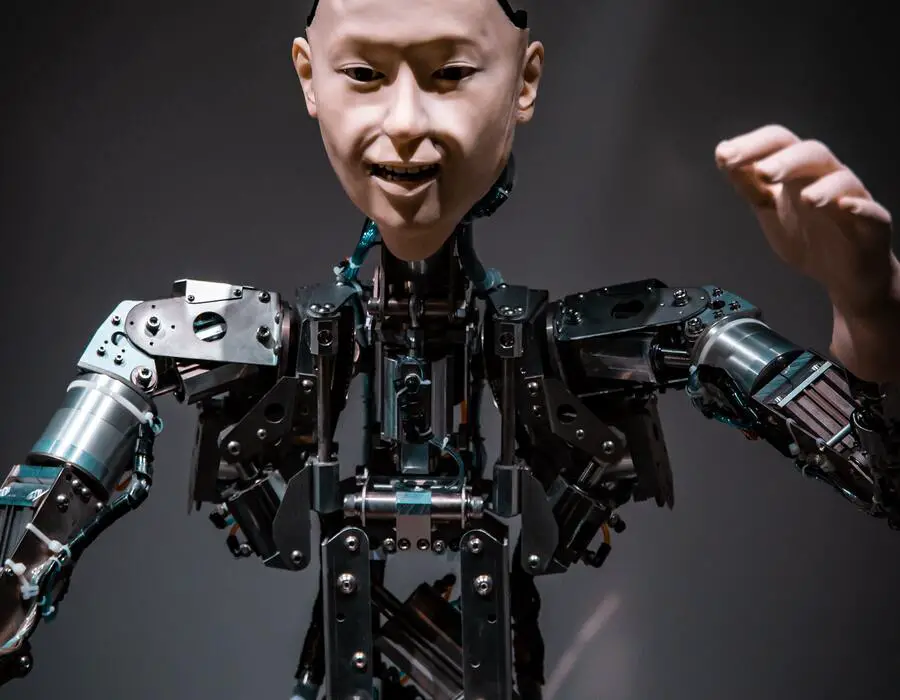

Point-E lets users enter a text prompt to generate a 3D model. Although its name and function resemble DALL-E's, it relies on a different machine learning model, GLIDE, and its results are not as impressive as DALL-E's.

According to the Point-E team, although the sample quality still falls short of the state of the art, sampling is one to two orders of magnitude faster, which is a useful trade-off for some applications.

A 3D model can be generated in 1 to 2 minutes, whereas conventional methods take several hours, so the emphasis this time is on processing speed.

Point-E is still basic research and is not yet ready for commercial use. In the future, such technology could support metaverse development and lower the barrier to 3D model production.