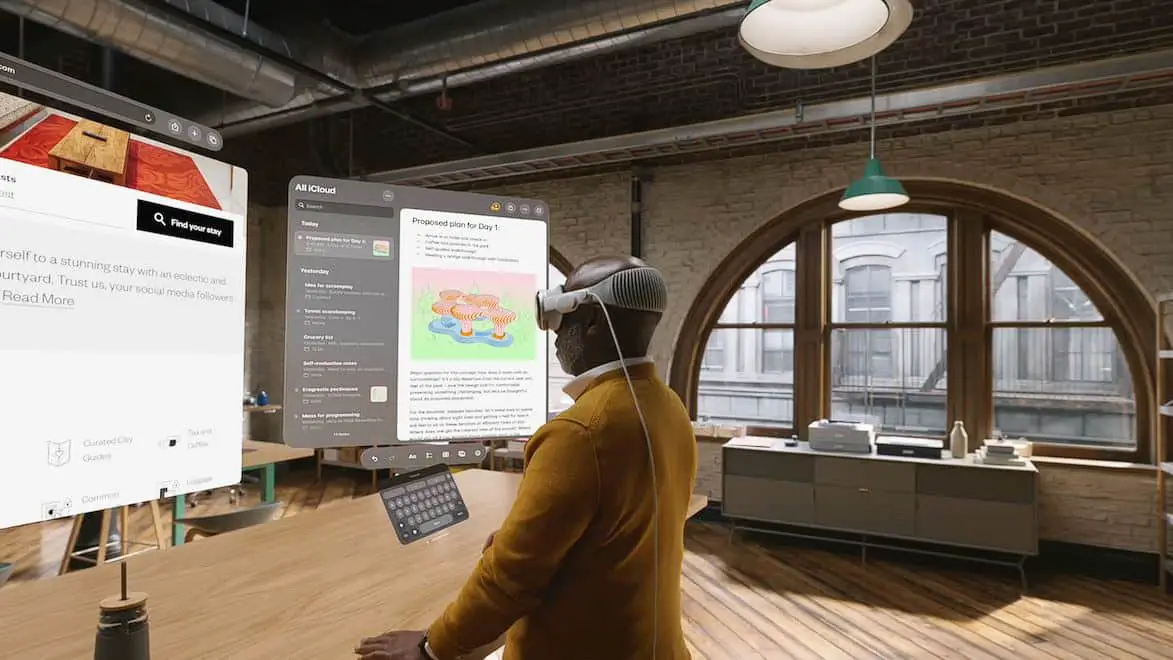

Vision Pro, the first model of what Apple calls a “spatial computing device,” has finally made its official debut. Until it is released and reaches users’ hands, it will remain the subject of much curiosity and speculation.

Following Apple’s unveiling of Vision Pro, Sterling Crispin, who previously served as a neurotechnology prototype researcher at the company, tweeted about his contributions to its development and described powerful technology that has yet to be fully demonstrated to users.

Vision Pro seamlessly integrates digital content into the real world and features a fully 3D user interface. It is controlled in a natural and intuitive manner through the user’s eyes, hands, or voice, almost as if by thought alone. Its built-in high-performance eye-tracking system uses high-speed cameras and a ring of LEDs that project invisible light patterns onto the user’s eyes, enabling responsive, intuitive input.

Sterling Crispin’s work at Apple focused mostly on the user’s immersive experience: detecting the user’s mental state from data about how their body and brain respond. Although most of that work is covered by a confidentiality agreement and cannot be disclosed, some of it has become public through patents.

As an example, he said that the technology can predict that a user is about to click on something before they actually do. It looks like mind reading, but according to Crispin it is something Vision Pro can actually do.

Vision Pro Will Observe the User

When a user is in a VR (Virtual Reality) or MR (Mixed Reality) environment, an AI model tries to predict whether the user is curious, mind-wandering, fearful, focused, recalling a past experience, or in some other state. These mental states can be inferred from measurements such as eye tracking, electrical activity in the brain, heartbeat and rhythm, muscle activity, blood density in the brain, blood pressure, and skin conductance.

Predicting a user’s click is certainly a daunting challenge. The user’s pupils react before the click, partly because the user expects something to happen afterward. By monitoring the user’s eye behavior, the system can therefore establish a biofeedback loop with the brain and let the UI adapt to the user.
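To make the idea of an anticipatory pupil response more concrete, here is a minimal sketch in Python. It is not Apple’s method, which has not been published in this form; it simply assumes a hypothetical stream of pupil-diameter readings in millimeters and flags the first reading that rises well above a resting baseline. The function name, the baseline length, and the threshold are all illustrative assumptions.

```python
from statistics import mean, stdev

def first_anticipatory_dilation(samples, baseline_len=20, z_threshold=3.0):
    """Return the index of the first pupil-diameter sample that exceeds the
    resting baseline by more than z_threshold standard deviations.

    samples: hypothetical pupil diameters in millimeters, oldest first.
    Returns None if there is too little data or no sample crosses the threshold.
    """
    if len(samples) <= baseline_len:
        return None  # not enough data to form a baseline and test against it

    baseline = samples[:baseline_len]
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        sigma = 1e-9  # avoid division by zero on a perfectly flat baseline

    for i in range(baseline_len, len(samples)):
        z = (samples[i] - mu) / sigma
        if z > z_threshold:
            return i  # dilation well above resting level: possible pre-click signal
    return None

# Hypothetical readings: a steady baseline followed by a sudden dilation.
readings = [3.0, 3.02] * 10 + [3.01, 3.3, 3.5]
print(first_anticipatory_dilation(readings))
```

A real system would have to separate this signal from changes in lighting, blinks, and measurement noise, which a simple threshold like this does not attempt.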

Other ways of inferring mental states include rapidly flashing visuals or playing sounds to users and then monitoring their reactions.

Another patent details how to use machine learning and signals from the body and brain to predict how focused or relaxed the user is, or how well they are learning, and then update the virtual environment to enhance that state, for example by changing the background content to help users work, study, or relax.
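The predict-then-adapt loop described in that patent can be illustrated with a small, purely hypothetical sketch. It assumes a model that outputs a focus score between 0 and 1 and a single background "intensity" setting, and it assumes (for illustration only) that a calmer background helps focus. None of these names or values come from Apple or from the patent.

```python
def adapt_background(intensity, predicted_focus, target_focus=0.8, gain=0.5):
    """Nudge a background intensity setting toward a state that supports focus.

    intensity:       current background intensity in [0, 1] (hypothetical setting)
    predicted_focus: hypothetical model output in [0, 1]
    target_focus:    focus level the environment tries to maintain (assumed)
    gain:            how strongly the environment reacts to the gap (assumed)
    """
    # Positive error means the user is less focused than the target.
    error = target_focus - predicted_focus
    # Assumption: a less intense background helps, so lower it when focus lags.
    new_intensity = intensity - gain * error
    # Keep the setting inside its valid range.
    return min(1.0, max(0.0, new_intensity))

# Hypothetical loop: focus stays low, so the background is calmed step by step.
level = 0.5
for focus in [0.4, 0.5, 0.6]:
    level = adapt_background(level, focus)
    print(round(level, 2))
```

Each pass through the loop is one round of the cycle the patent describes: measure, predict the state, then adjust what the user sees.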

It is worth noting, however, that Apple emphasizes that Vision Pro is built on a foundation of privacy and security. The company says that users’ browsing content and eye-tracking information will not be shared with Apple itself, third-party websites, or services.

Data from the camera and other sensors is processed at the system level. Does this mean that users have nothing to worry about? Users’ eyes are constantly monitored, and such intimate, real-time behavioral records raise the question of whether they could be secretly used for other purposes. Outside observers have voiced skepticism.

At the end of this Apple conference, the long-rumored Vision Pro was unveiled as the “One more thing,” inviting people to take a big leap from “PC computing” and “mobile computing” into the new realm of “Spatial Computing.” With some time remaining before its release next year, Apple not only needs to work hard to deliver a compelling experience but should also give more concrete answers to concerns about security.